Homework 11 - Due: 04/30

For minimum-norm optimal control, see FDLS, Section 22. For LQR and an introduction to optimal control theory, see BMP, Sections 10.1–10.5, 11.1–11.2, and 11.4–11.5. For time-optimal control, see Dr. Liberzon’s handout.

Problem 1

Consider the optimal control problem

Write down a partial differential equation for the optimal cost

Simplify the PDE by computing the minimum in it. Using this minimum calculation, write down an expression for the optimal control law in state feedback form. (This expression can contain partial derivatives of the optimal cost, evaluated along the optimal trajectory.)
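For reference, here is a sketch of the general form such a PDE takes in a fixed-final-time, free-endpoint setting. All notation is assumed for illustration and is not taken from the problem statement: dynamics $\dot x = f(t,x,u)$, running cost $L(t,x,u)$, terminal cost $K(x)$, final time $t_1$, and optimal cost $V(t,x)$.

$$
-V_t(t,x) = \min_{u}\Big\{ L(t,x,u) + V_x(t,x)\cdot f(t,x,u) \Big\}, \qquad V(t_1,x) = K(x).
$$

When the minimum is attained at a unique $u$, writing that minimizer as a function of $(t,x)$ and of $V_x(t,x)$ gives a control law in state feedback form.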

Problem 2

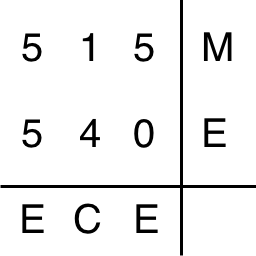

Consider the minimum-time parking problem discussed in class: bring a car modeled by the system

Problem 3

Consider the optimal control problem given by the system

Find the optimal cost function (also called the value function)

Write down the HJB equation for this problem, and simplify it by computing the minimum in it.

Does the optimal cost function from part (a) satisfy the HJB equation from part (b) everywhere?

Problem 4

Consider the LQR problem

Problem 5

Consider the infinite-horizon LQR problem

Find the optimal cost
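A hand-computed answer to a problem of this type can be sanity-checked numerically. The sketch below does so for a hypothetical scalar problem, $\dot x = ax + bu$ with cost $\int_0^\infty (q x^2 + r u^2)\,dt$. The values of `a`, `b`, `q`, `r`, and `x0` are illustrative assumptions, not the data of this problem, and `scalar_lqr` is a helper name introduced here.

```python
import math


def scalar_lqr(a, b, q, r):
    """Return (p, k) for the scalar infinite-horizon LQR problem.

    p is the positive root of the algebraic Riccati equation
        2*a*p - (b*p)**2 / r + q = 0,
    and k = b*p/r is the gain of the feedback law u = -k*x.
    Assumes b != 0, q > 0, r > 0 (hypothetical setting, not the homework data).
    """
    if b == 0 or q <= 0 or r <= 0:
        raise ValueError("need b != 0, q > 0, r > 0")
    p = r * (a + math.sqrt(a * a + b * b * q / r)) / (b * b)
    k = b * p / r
    return p, k


# Illustrative numbers only (assumed, not from the assignment).
a, b, q, r = 1.0, 1.0, 1.0, 1.0
x0 = 2.0

p, k = scalar_lqr(a, b, q, r)
optimal_cost = p * x0 ** 2      # V(x0) = p * x0^2
closed_loop_pole = a - b * k    # negative for the stabilizing root

print(f"p = {p:.6f}, k = {k:.6f}")
print(f"optimal cost from x0 = {x0}: {optimal_cost:.6f}")
print(f"closed-loop pole: {closed_loop_pole:.6f}")
```

For a matrix-valued problem the same check applies with the matrix Riccati equation in place of the scalar one; the closed-form root above is specific to the scalar case.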

Problem 6

LQR theory can be extended to problems where the derivative of the control variable appears in the performance index (i.e., we penalize not only large control values but also sudden changes in them). Consider minimization of the cost function

subject to

- Assume

- Apply your solution to the specific problem where the dynamics and cost function are as follows:

Hint: For Part (b) your solution should have a control law of the form