Boaty McBoatface (from the BBC)

So, now, let's assume that we have a clean sequence of words. By "word," I mean a chunk of a size that's convenient for later algorithms (e.g. parsing, translation). This might be the output of a speech recognizer. Or, because many natural language systems don't start with speech, the stream of words might be (cleaned-up) written text.

These words then need to be divided into morphemes by a "morphology" algorithm. Words in some languages can get extremely long (e.g. Turkish), making it hard to do further processing unless they are (at least partly) subdivided into morphemes. For example:

unanswerable --> un-answer-able

preconditions --> pre-condition-s

Or, consider the following well-known Chinese word:

Zhōng + guó

In modern Chinese, this is one word ("China"). And our AI system would likely be able to accumulate enough information about China by treating it as one two-syllable item. But it is historically (and in the writing system) made from two morphemes ("middle" and "country"). It is what's called a "transparent compound," i.e. a speaker of the language would be aware of the separate pieces. This knowledge of the internal word structure would allow a human to guess that a less common word ending in "guo" might also be the name of a country.

Sometimes related words are formed by processes other than concatenation, e.g. the internal vowel change in English "foot" vs. "feet". When this is common in a language, we may wish to use a more abstract feature-based representation, e.g.

foot --> foot+SINGULAR

feet --> foot+PLURAL

Sometimes the appropriate division depends on the application. So the English possessive ending "'s" is traditionally written as part of the preceding word. However, it is often convenient to split it off as a separate word in later processing.
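The concatenative examples above (un-answer-able, pre-condition-s) can be sketched with a simple affix stripper. This is only a minimal illustration, not a real morphology algorithm: the affix lists `PREFIXES` and `SUFFIXES`, the function name `segment`, and the `min_stem` cutoff are all assumptions made up for this example.

```python
# Hypothetical, hand-picked affix lists; a real system would learn these
# or take them from a lexicon.
PREFIXES = ("un", "pre")
SUFFIXES = ("able", "s")

def segment(word, min_stem=3):
    """Greedily strip known prefixes and suffixes, keeping a stem of
    at least min_stem letters."""
    prefixes, suffixes = [], []
    changed = True
    while changed:
        changed = False
        for p in PREFIXES:
            if word.startswith(p) and len(word) - len(p) >= min_stem:
                prefixes.append(p)
                word = word[len(p):]
                changed = True
                break
        for s in SUFFIXES:
            if word.endswith(s) and len(word) - len(s) >= min_stem:
                suffixes.insert(0, s)      # keep suffixes in surface order
                word = word[:-len(s)]
                changed = True
                break
    return prefixes + [word] + suffixes

print("-".join(segment("unanswerable")))    # un-answer-able
print("-".join(segment("preconditions")))   # pre-condition-s
```

Greedy stripping like this over-segments easily (it splits "under" into "un-der"), which is one reason practical systems rely on lexicons or learned models instead.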

In order to group words into larger units (e.g. prepositional phrases), the first step is typically to assign a "part of speech" (POS) tag to each word. Here is some sample text from the Brown corpus, which contains very clean written text.

Northern liberals are the chief supporters of civil rights and of integration. They have also led the nation in the direction of a welfare state.

Here's the tagged version. For example, "liberals" is tagged NNS, i.e. a plural noun. "Northern" is an adjective (JJ). Tag sets need to distinguish major types of words (e.g. nouns vs. adjectives) and major variations, e.g. singular vs. plural nouns, present vs. past tense verbs. There are also some ad-hoc tags for key function words, such as HV for "have," and for punctuation (e.g. period).

Northern/jj liberals/nns are/ber the/at chief/jjs supporters/nns of/in civil/jj rights/nns and/cc of/in integration/nn ./. They/ppss have/hv also/rb led/vbn the/at nation/nn in/in the/at direction/nn of/in a/at welfare/nn state/nn ./.

Most words have only one common part of speech. So we can get up to about 91% accuracy using a simple "baseline" algorithm that always guesses the most common part of speech for each word. However, some words have more than one possible tag, e.g. "liberal" could be either a noun or an adjective. To get these right, we can use algorithms like hidden Markov models (next lecture). On clean text, a highly tuned POS tagger can get about 97% accuracy. In other words, POS taggers are quite reliable and mostly used as a stable starting place for further analysis.
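The baseline tagger described above can be sketched in a few lines. This is a minimal illustration trained on just the two tagged Brown sentences shown earlier; the function names (`parse_tagged`, `train_baseline`, `tag_baseline`) and the choice to give unseen words the overall most common tag are assumptions of this sketch, not details from the lecture.

```python
from collections import Counter, defaultdict

TRAIN = ("Northern/jj liberals/nns are/ber the/at chief/jjs supporters/nns "
         "of/in civil/jj rights/nns and/cc of/in integration/nn ./. "
         "They/ppss have/hv also/rb led/vbn the/at nation/nn in/in the/at "
         "direction/nn of/in a/at welfare/nn state/nn ./.")

def parse_tagged(text):
    """Split Brown-style 'word/tag' tokens into (lowercased word, tag) pairs."""
    pairs = []
    for token in text.split():
        word, _, tag = token.rpartition("/")   # split on the LAST slash
        pairs.append((word.lower(), tag))
    return pairs

def train_baseline(pairs):
    """Record the most common tag for each word, plus the most common tag overall."""
    counts = defaultdict(Counter)
    for word, tag in pairs:
        counts[word][tag] += 1
    word_to_tag = {w: c.most_common(1)[0][0] for w, c in counts.items()}
    default_tag = Counter(tag for _, tag in pairs).most_common(1)[0][0]
    return word_to_tag, default_tag

def tag_baseline(words, word_to_tag, default_tag):
    """Tag each word with its most common training tag (or the default if unseen)."""
    return [(w, word_to_tag.get(w.lower(), default_tag)) for w in words]

word_to_tag, default_tag = train_baseline(parse_tagged(TRAIN))
print(tag_baseline(["The", "nation", "voted"], word_to_tag, default_tag))
```

With so little training data, most words are unseen and fall back to the default tag; the accuracy figures quoted above assume training on a full corpus.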

Many useful systems can be built using only a simple local analysis of the word stream, e.g. words, word bigrams, morphemes, POS tags, perhaps a shallow parse (see below). These algorithms typically make a "Markov assumption," i.e. assume that only the last few items matter to the next decision. E.g.

Specific techniques include finite-state automata, hidden Markov models (HMMs), and recurrent neural nets (RNNs). We'll see hidden Markov models later in this course.
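The simplest instance of a Markov assumption is a word bigram model, in which the next word is predicted from the previous word alone. The sketch below estimates these probabilities from raw counts; the function names and the toy sentence are assumptions for illustration, and a practical model would add smoothing for unseen pairs.

```python
from collections import Counter, defaultdict

def train_bigrams(words):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts

def next_word_prob(counts, prev, nxt):
    """Estimate P(nxt | prev) from counts; 0.0 if prev was never seen."""
    following = counts.get(prev, Counter())
    total = sum(following.values())
    return following[nxt] / total if total else 0.0

words = "the cat sat on the mat".split()
counts = train_bigrams(words)
print(next_word_prob(counts, "the", "cat"))   # "the" is followed by "cat" once out of twice
```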

One very old type of shallow system is spelling correction. Useful upgrades (which often work) include detecting grammar errors and adding vowels or diacritics in languages (e.g. Arabic) where they are often omitted. Cutting-edge research problems involve automatic scoring of essay answers on standardized tests. Speech recognition has been used for evaluating English fluency and children learning to read (e.g. aloud). Success depends on very strong expectations about what the person will say.

Translation is often done by shallow algorithms. To learn how to do translation, we might align pairs of sentences in different languages, matching corresponding words.

English: 18-year-olds can't buy alcohol.

French: Les 18 ans ne peuvent pas acheter d'alcool

| 18 | year | olds | can | 't | buy | alcohol | |||

| Les | 18 | ans | ne | peuvent | pas | acheter | d' | alcool |

Notice that some words don't have matches in the other language. For other pairs of languages, there could be radical changes in word order.

A corpus of matched sentence pairs can be used to build translation dictionaries (for phrases as well as words) and extract general knowledge about changes in word order.
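As a rough sketch of how aligned sentence pairs turn into a translation dictionary, the code below counts which target words each source word is linked to. The alignment links for the English/French example are hand-written assumptions for illustration (real systems learn alignments automatically), and `build_dictionary` is a hypothetical helper name.

```python
from collections import Counter, defaultdict

def build_dictionary(aligned_corpus):
    """Count how often each source word is aligned to each target word."""
    table = defaultdict(Counter)
    for src_words, tgt_words, links in aligned_corpus:
        for i, j in links:
            table[src_words[i]][tgt_words[j]] += 1
    return table

english = ["18", "year", "olds", "can", "'t", "buy", "alcohol"]
french = ["Les", "18", "ans", "ne", "peuvent", "pas", "acheter", "d'", "alcool"]
# Assumed links as (English index, French index). "Les" and "d'" are left
# unaligned, and "'t" links to both "ne" and "pas".
links = [(0, 1), (1, 2), (2, 2), (3, 4), (4, 3), (4, 5), (5, 6), (6, 8)]

table = build_dictionary([(english, french, links)])
for word in english:
    print(word, "->", sorted(table[word]))
```

With many sentence pairs, the counts for each word would indicate its most likely translations; extending the keys from single words to word sequences gives a phrase dictionary.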

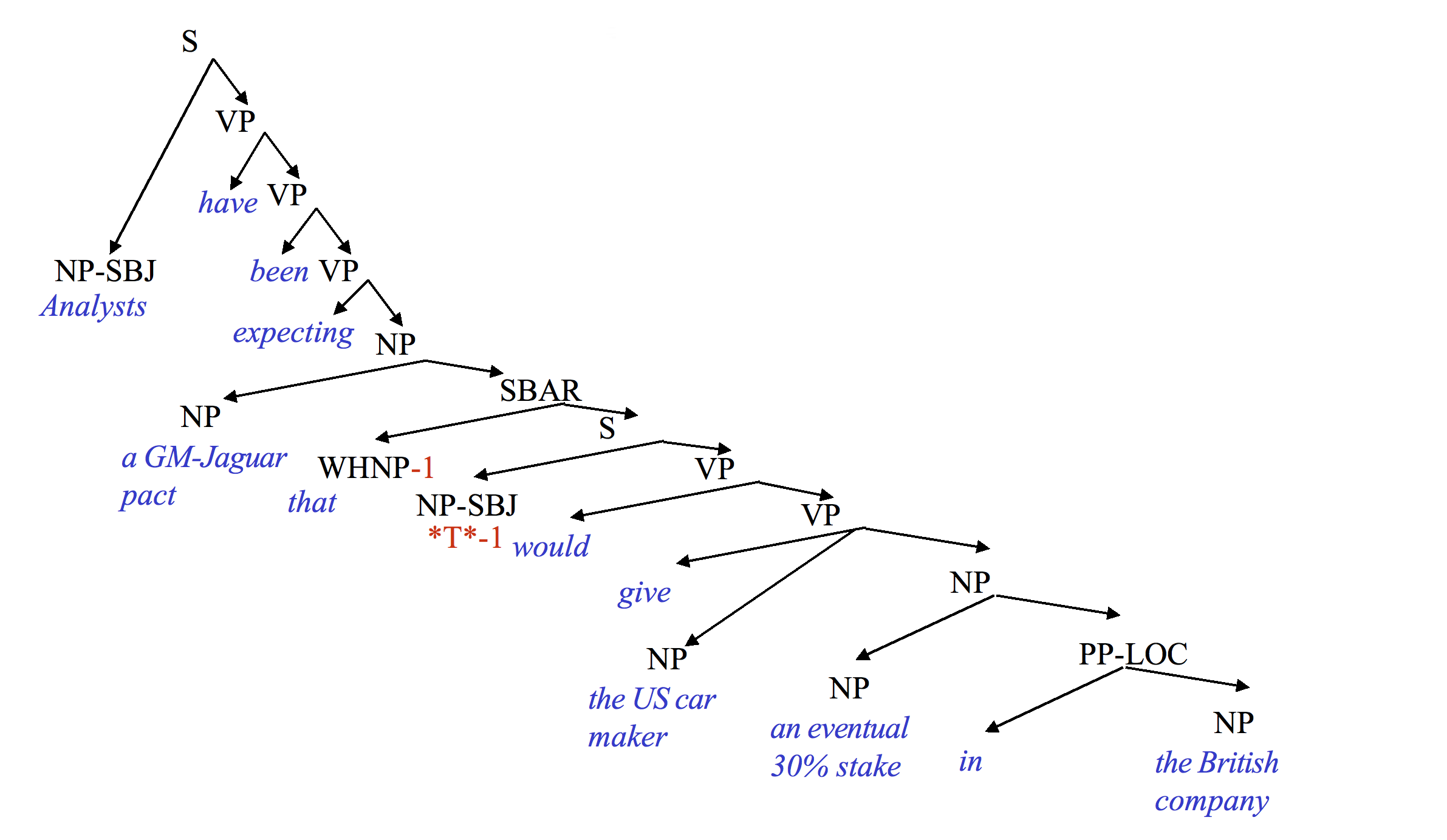

Once we have POS tags for the words, we can assemble the words into a parse tree. There are many styles for building parse trees. Here is a constituency tree from the Penn treebank (from Mitch Marcus).

Penn treebank style parse tree (from Mitch Marcus)

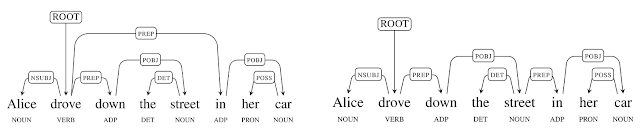

An alternative is a dependency tree like the ones below from Google labs. A fairly recent parser from them is called "Parsey McParseface," after the UK's Boaty McBoatface shown above.

In this example, the lefthand tree shows the correct attachment for "in her car," i.e. modifying "drove." The righthand tree shows an interpretation in which the street is in the car.

Linguists (computational and otherwise) have extended fights about the best way to draw these trees. However, the information encoded is always fairly similar and primarily involves grouping together words that form coherent phrases e.g. "a welfare state." It's fairly similar to parsing computer programming languages, except that programming languages have been designed to make parsing easy.

A "shallow parser" builds only the lowest parts of such a tree. So it might group words into noun phrases, prepositional phrases, and complex verbs (e.g. "is walking"). Extracting these phrases is much easier than building the whole parse tree, and can be extremely useful for building shallow systems.
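A minimal sketch of the noun-phrase part of a shallow parser is shown below. It assumes the input is already POS-tagged with the Brown tags used earlier, and it uses one hand-written pattern (optional determiner, any adjectives, one or more nouns). The tag groupings and the function name `np_chunks` are assumptions of this example; real chunkers are usually trained from annotated data.

```python
DET = {"at"}           # articles in the Brown tag set
ADJ = {"jj", "jjs"}
NOUN = {"nn", "nns"}

def np_chunks(tagged):
    """Return noun phrases matching: optional determiner, adjectives, 1+ nouns."""
    chunks, i, n = [], 0, len(tagged)
    while i < n:
        j = i
        if tagged[j][1] in DET:
            j += 1
        while j < n and tagged[j][1] in ADJ:
            j += 1
        k = j
        while k < n and tagged[k][1] in NOUN:
            k += 1
        if k > j:                      # found at least one noun: emit the chunk
            chunks.append(" ".join(word for word, _ in tagged[i:k]))
            i = k
        else:                          # no noun phrase starts here; move on
            i += 1
    return chunks

tagged = [("of", "in"), ("a", "at"), ("welfare", "nn"), ("state", "nn"), (".", ".")]
print(np_chunks(tagged))   # ['a welfare state']
```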

Like low-level algorithms, parsers often make their decisions using only a small amount of prior (and perhaps lookahead) context. However, "one item" of context might be a whole chunk of the parse tree. For example, "the young lady" might be considered a single unit. Parsing algorithms must typically consider a wide space of options, e.g. many options for a partly-built tree. So they typically use beam search, i.e. keep only a fixed number of hypotheses with the best rating. Newer methods also try to share tree structure among competing alternatives (like dynamic programming) so they can store more hypotheses and avoid duplicative work.
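The beam search idea can be sketched generically: at each step, extend every surviving hypothesis, then keep only the best few. In this illustration a toy tagging problem stands in for partly-built parse trees, and the scores in `SCORES` are made-up numbers, not output from any real model. The names `beam_search`, `expand`, and `is_done` are assumptions of this sketch.

```python
import heapq

def beam_search(start, expand, is_done, beam_width=3, max_steps=50):
    """Keep only the beam_width best-scoring hypotheses at each step.

    expand(state) returns (score_increment, next_state) pairs; higher is better.
    """
    beam = [(0.0, start)]
    for _ in range(max_steps):
        if all(is_done(state) for _, state in beam):
            break
        candidates = []
        for score, state in beam:
            if is_done(state):
                candidates.append((score, state))   # carry finished hypotheses forward
            else:
                for delta, nxt in expand(state):
                    candidates.append((score + delta, nxt))
        if not candidates:
            break
        beam = heapq.nlargest(beam_width, candidates, key=lambda c: c[0])
    return max(beam, key=lambda c: c[0])

# Made-up log-probability-like scores for tagging "the young lady".
SCORES = [
    {"at": -0.1, "nn": -3.0},   # "the"
    {"jj": -0.7, "nn": -0.9},   # "young"
    {"nn": -0.2, "vb": -2.0},   # "lady"
]

def expand(tags):
    return [(s, tags + (t,)) for t, s in SCORES[len(tags)].items()]

def is_done(tags):
    return len(tags) == len(SCORES)

best_score, best_tags = beam_search((), expand, is_done, beam_width=2)
print(best_tags)   # ('at', 'jj', 'nn')
```

A narrow beam is fast but can discard a hypothesis that would have turned out best later; widening the beam trades time and memory for a lower risk of that happening.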

Parsers fall into three categories

The value of lexical information is illustrated by sentences like this, in which changing the noun phrase changes what the prepositional phrase modifies:

She was going down a street ..

in her truck. (modifies going)

in her new outfit. (modifies the subject)

in South Chicago. (modifies street)

The best parsers are lexicalized (accuracies up to 94% from Google's "Parsey McParseface" parser). But it's not clear how much information to include about the words and their meanings. For example, should "car" always behave like "truck"? More detailed information helps make decisions (esp. attachment) but requires more training data.

Notice that the tag set for the Brown corpus was somewhat specialized for English, in which forms of "have" and "to be" play a critical syntactic role. Tag sets for other languages would need some of the same tags (e.g. for nouns) but also categories for types of function words that English doesn't use. For example, a tag set for Chinese or Mayan would need a tag for numeral classifiers, which are words that go with numbers (e.g. "three") to indicate the approximate type of object being enumerated (e.g. "table" might require a classifier for big flat objects). It's not clear whether it's better to have specialized tag sets for specific languages or one universal tag set that includes major functional categories for all languages.

Tag sets vary in size, depending on the theoretical biases of the folks making the annotated data. Smaller sets of labels convey only basic information about word type. Larger sets include information about what role the word plays in the surrounding context. Sample sizes

Conversational spoken language also includes features not found in written language. In the example below (from the Switchboard corpus), you can see the filled pause "uh" and a broken-off word "t-". Also, notice that the first sentence is broken up by a parenthetical comment ("you know") and the third sentence trails off at the end. Such features make spoken conversation harder to parse than written text.

I 'd be very very careful and , uh , you know , checking them out . Uh , our , had t- , place my mother in a nursing home . She had a rather massive stroke about , uh, about ---

I/PRP 'd/MD be/VB very/RB very/RB careful/JJ and/CC ,/, uh/UH ,/, you/PRP know/VBP ,/, checking/VBG them/PRP out/RP ./. Uh/UH ,/, our/PRP$ ,/, had/VBD t-/TO ,/, place/VB my/PRP$ mother/NN in/IN a/DT nursing/NN home/NN ./. She/PRP had/VBD a/DT rather/RB massive/JJ stroke/NN about/RB ,/, uh/UH ,/, about/RB --/:

Representation of meaning is less well understood. In modern systems, meaning of individual word stems (e.g. "cat" or "walk") is based on their observed context. We'll see details later in the term. But these meanings are not tied, or are only weakly tied, to the physical world. Methods for combining the meanings of individual words into a single meaning (e.g. "the red cat") are similarly fragile. Very few systems attempt to understand sophisticated constructions involving quantifiers ("How many arrows didn't hit the target?") or relative clauses. (An example of a relative clause is "that would give ..." in the Penn treebank parse example above.)

A specific issue for even simple applications is that negation is easy for humans but difficult for computers. For example, a Google query for "Africa, not francophone" returns information on French-speaking parts of Africa. And this circular translation example shows Google making the following conversion, which reverses the polarity of the advice.

Applications that can work well with limited understanding include

Three types of shallow semantic analysis have proved useful and almost within current capabilities:

Semantic role labelling involves deciding how the main noun phrases in a clause relate to the verb. For example, in "John drove the car," "John" is the subject/agent and "the car" is the object being driven. These relationships aren't always obvious, e.g. who is eating whom in a "truck eating bridge"? (Google it.)

Right now, the most popular representation for word classes is a "word embedding." Word embeddings give each word a unique location in a high dimensional Euclidean space, set up so that similar words are close together. We'll see the details later. A popular algorithm is word2vec.
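The notion of similar words being close together can be illustrated with a few toy vectors. The 3-dimensional vectors below are made up for this sketch; real embeddings (e.g. from word2vec) are learned from data and have many more dimensions. Closeness is measured here with cosine similarity, a common choice for embeddings; this is a substitution made for the example, and Euclidean distance could be used instead.

```python
import math

# Made-up vectors for illustration only.
EMBEDDINGS = {
    "cat": [0.9, 0.8, 0.1],
    "dog": [0.8, 0.9, 0.2],
    "car": [0.1, 0.2, 0.9],
}

def cosine(u, v):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def nearest(word):
    """Return the other word whose vector is most similar to word's vector."""
    return max((w for w in EMBEDDINGS if w != word),
               key=lambda w: cosine(EMBEDDINGS[word], EMBEDDINGS[w]))

print(nearest("cat"))   # dog
```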

Running text contains a number of "named entities," i.e. nouns, pronouns, and noun phrases referring to people, organizations, and places. Co-reference resolution tries to identify which named entities refer to the same thing. For example, in this text from Wikipedia, we have identified three entities as referring to Michelle Obama, two as Barack Obama, and three as places that are neither of them. One source of difficulty is items such as the last "Obama," which looks superficially like it could be either of them.

[Michelle LaVaughn Robinson Obama] (born January 17, 1964) is an American lawyer, university administrator, and writer who served as the [First Lady of the United States] from 2009 to 2017. She is married to the [44th U.S. President], [Barack Obama], and was the first African-American First Lady. Raised on the South Side of [Chicago, Illinois], [Obama] is a graduate of [Princeton University] and [Harvard Law School].

From a very young age, people have a strong ability to manage interactions over a long period of time. E.g. we can keep talking about a coherent theme (e.g. politics) for an entire evening. We can ask follow-on questions to build up a mental model in which all the pieces fit together. We expect all the pieces to fit together when reading a novel. Even the best modern AI systems cannot yet do this.

One specific task is "dialog coherency." For example, suppose you ask Google Home a question like "How many calories in a banana?" You might like to follow on with "How about an orange?" But most of these systems process each query separately. The exceptions can keep only a very short theory of context or (e.g. customer service systems) work in a very restricted domain. A more fun example of maintaining coherency in a restricted domain was the ILEX system for providing information about museum exhibits. (original paper link)

The recent neural net system GPT-3 has a strong ability to maintain local coherency in its generated text and dialogs. However, this comes at a cost. Because it has no actual model of the world or its own beliefs, it can be led astray by leading questions.