Probability 2

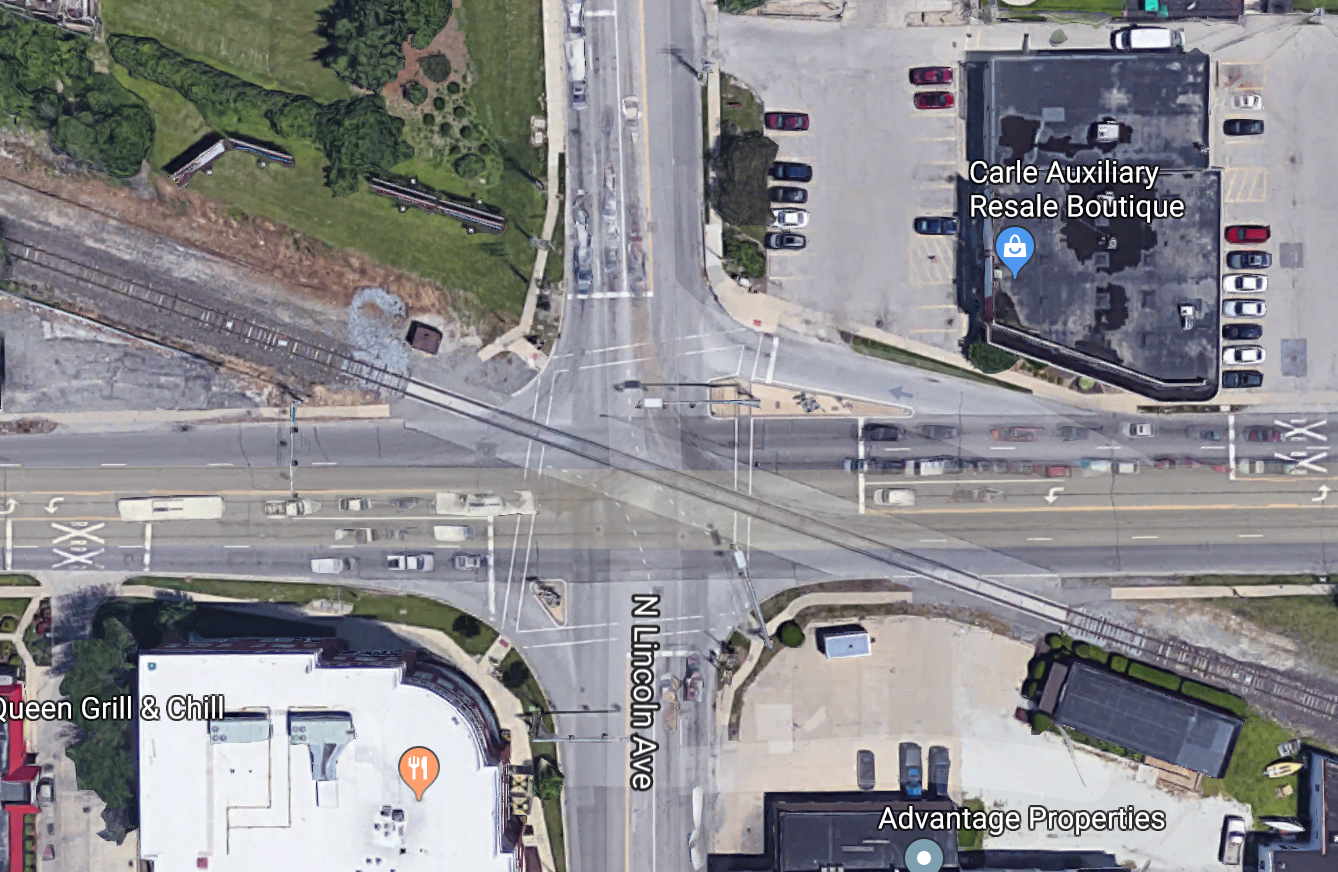

Here's a model of two variables for the University/Goodwin intersection:

| N/S light | E/W green | E/W yellow | E/W red |
|---|---|---|---|
| green | 0 | 0 | 0.2 |
| yellow | 0 | 0 | 0.1 |
| red | 0.5 | 0.1 | 0.1 |

To be a probability distribution, the numbers must add up to 1 (which they do in this example).
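As a quick check of the "adds up to 1" requirement, here is a minimal Python sketch. The dictionary layout (keys are `(N/S light, E/W light)` pairs) and the helper name `is_distribution` are illustrative choices, not part of the original notes.

```python
import math

# Joint distribution from the table above, keyed by (N/S light, E/W light).
joint = {
    ("green", "green"): 0.0, ("green", "yellow"): 0.0, ("green", "red"): 0.2,
    ("yellow", "green"): 0.0, ("yellow", "yellow"): 0.0, ("yellow", "red"): 0.1,
    ("red", "green"): 0.5, ("red", "yellow"): 0.1, ("red", "red"): 0.1,
}

def is_distribution(table):
    """True if every entry is non-negative and the entries sum to 1."""
    total = sum(table.values())
    return all(p >= 0 for p in table.values()) and math.isclose(total, 1.0)

print(is_distribution(joint))
```

The comparison uses `math.isclose` rather than `==` because sums of decimal fractions such as 0.1 are not exact in floating point.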

Most model-builders assume that probabilities aren't actually zero. That is, unobserved events do occur but they just happen so infrequently that we haven't yet observed one. So a more realistic model might be

| N/S light | E/W green | E/W yellow | E/W red |
|---|---|---|---|
| green | e | e | 0.2-f |
| yellow | e | e | 0.1-f |
| red | 0.5-f | 0.1-f | 0.1-f |

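To make this a proper probability distribution, we need to set f=(4/5)e so all the values add up to 1. The four cells that were zero add 4e in total and the five nonzero cells lose 5f in total, so we need 4e = 5f.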
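The relationship between e and f can be checked with exact arithmetic. This is a sketch only: the function name `smoothed_joint` and the value e = 1/1000 are assumptions made for illustration, and the table is stored in tenths so that `Fraction` gives exact results.

```python
from fractions import Fraction

# Original table in exact tenths, keyed by (N/S light, E/W light).
BASE = {
    ("green", "green"): 0, ("green", "yellow"): 0, ("green", "red"): 2,
    ("yellow", "green"): 0, ("yellow", "yellow"): 0, ("yellow", "red"): 1,
    ("red", "green"): 5, ("red", "yellow"): 1, ("red", "red"): 1,
}

def smoothed_joint(e):
    """Replace each zero with e and subtract f = (4/5)e from each nonzero cell."""
    e = Fraction(e)
    f = Fraction(4, 5) * e
    return {
        key: e if tenths == 0 else Fraction(tenths, 10) - f
        for key, tenths in BASE.items()
    }

# e = 1/1000 is an arbitrary illustrative value. It must satisfy f < 0.1,
# that is e < 1/8, so that the smallest nonzero cells stay positive.
table = smoothed_joint(Fraction(1, 1000))
total = sum(table.values())
print(total)
```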

Suppose we are given a joint distribution like the one above, but we want to pay attention to only one variable. To get its distribution, we sum probabilities across all values of the other variable. Using the first table above (the one with zeros), the marginal distribution of the N/S light is

P(green) = 0.2

P(yellow) = 0.1

P(red) = 0.7

To write this in formal notation, suppose Y has values \( y_1, \ldots, y_n \). Then we compute the marginal probability P(X=x) using the formula

\( P(X=x) = \sum_{k=1}^n P(x,y_k) \).
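The summation formula can be sketched in Python as follows. The helper name `marginal` and its `axis` parameter are illustrative assumptions; position 0 of each key is the N/S light and position 1 is the E/W light.

```python
from collections import defaultdict

# Joint distribution of the two lights, keyed by (N/S light, E/W light).
joint = {
    ("green", "green"): 0.0, ("green", "yellow"): 0.0, ("green", "red"): 0.2,
    ("yellow", "green"): 0.0, ("yellow", "yellow"): 0.0, ("yellow", "red"): 0.1,
    ("red", "green"): 0.5, ("red", "yellow"): 0.1, ("red", "red"): 0.1,
}

def marginal(table, axis):
    """Sum out the other variable: axis=0 keeps N/S, axis=1 keeps E/W."""
    result = defaultdict(float)
    for key, p in table.items():
        result[key[axis]] += p
    return dict(result)

ns_marginal = marginal(joint, axis=0)
ew_marginal = marginal(joint, axis=1)
print(ns_marginal)
print(ew_marginal)
```

Up to floating-point rounding, the N/S marginal is 0.2, 0.1, 0.7 and the E/W marginal is 0.5, 0.1, 0.4 for green, yellow, red.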

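Suppose we know that the N/S light is red. What are the probabilities for the E/W light? Let's just extract that line of our joint distribution.

| N/S light | E/W green | E/W yellow | E/W red |
|---|---|---|---|
| red | 0.5 | 0.1 | 0.1 |

So we have a distribution that looks like this:

P(E/W=green | N/S = red) = 0.5

P(E/W=yellow | N/S = red) = 0.1

P(E/W=red | N/S = red) = 0.1

Oops, these three probabilities don't sum to 1. So this isn't a legit probability distribution (see Kolmogorov's Axioms above). To make them sum to 1, divide each one by the sum they currently have (which is 0.7). This gives us

P(E/W=green | N/S = red) = 0.5/0.7 = 5/7

P(E/W=yellow | N/S = red) = 0.1/0.7 = 1/7

P(E/W=red | N/S = red) = 0.1/0.7 = 1/7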

Conditional probability models how frequently we see each variable value in some context (e.g. how often is the barrier-arm down if it's nighttime). The conditional probability of A in a context C is defined to be

P(A | C) = P(A,C)/P(C)

Many other useful formulas can be derived from this definition plus the basic formulas given above. In particular, we can transform this definition into

P(A,C) = P(C) * P(A | C)

P(A,C) = P(A) * P(C | A)

These formulas extend to multiple inputs like this:

P(A,B,C) = P(A) * P(B | A) * P(C | A,B)

Two events A and B are independent iff

P(A,B) = P(A) * P(B)

This equation is equivalent to each of the following equations:

P(A | B) = P(A)

P(B | A) = P(B)

Exercise for the reader: why are these three equations all equivalent? Hint: use the definition of conditional probability. Figure this out for yourself, because it will help you become familiar with the definitions.

| N/S light | E/W green | E/W yellow | E/W red | marginals |
|---|---|---|---|---|
| green | 0 | 0 | 0.2 | 0.2 |
| yellow | 0 | 0 | 0.1 | 0.1 |
| red | 0.5 | 0.1 | 0.1 | 0.7 |
| marginals | 0.5 | 0.1 | 0.4 | |

P(green) = 0.2

P(yellow) = 0.1

P(red) = 0.7

Conditional probabilities

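| N/S light | E/W green | E/W yellow | E/W red |
|---|---|---|---|
| red | 0.5 | 0.1 | 0.1 |

Before normalizing, the extracted row sums to 0.7 rather than 1, so these values are not yet a probability distribution:

P(E/W=green | N/S = red) = 0.5

P(E/W=yellow | N/S = red) = 0.1

P(E/W=red | N/S = red) = 0.1

After dividing each value by 0.7: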

P(E/W=green | N/S = red) = 0.5/0.7 = 5/7

P(E/W=yellow | N/S = red) = 0.1/0.7 = 1/7

P(E/W=red | N/S = red) = 0.1/0.7 = 1/7
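The normalization step above (divide each entry of the N/S = red row by the row sum) can be sketched in Python. The helper name `normalize` is an illustrative choice, and `Fraction` is used so that the results come out exactly as 5/7, 1/7, 1/7.

```python
from fractions import Fraction

# The N/S = red row of the joint table, keyed by E/W light color.
row = {
    "green": Fraction(5, 10),
    "yellow": Fraction(1, 10),
    "red": Fraction(1, 10),
}

def normalize(weights):
    """Divide every entry by the total so that the entries sum to 1."""
    total = sum(weights.values())
    return {key: value / total for key, value in weights.items()}

conditional = normalize(row)
for color, p in conditional.items():
    print(f"P(E/W={color} | N/S=red) = {p}")
```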

Conditional probability equations

P(A | C) = P(A,C)/P(C)

P(A,C) = P(C) * P(A | C)

P(A,C) = P(A) * P(C | A)

P(A,B,C) = P(A) * P(B | A) * P(C | A,B)
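As a numerical sanity check of P(A,C) = P(C) * P(A | C) = P(A) * P(C | A), the sketch below uses the traffic-light table with A = "E/W light is green" and C = "N/S light is red". The function names are assumptions made for this example, not notation from the notes.

```python
from fractions import Fraction

LIGHTS = ("green", "yellow", "red")

# Joint table in tenths: each N/S color maps to its row over E/W green, yellow, red.
TENTHS = {"green": (0, 0, 2), "yellow": (0, 0, 1), "red": (5, 1, 1)}
joint = {
    (ns, ew): Fraction(n, 10)
    for ns, row in TENTHS.items()
    for ew, n in zip(LIGHTS, row)
}

def p_ns(ns):
    """Marginal probability of an N/S color."""
    return sum(joint[(ns, ew)] for ew in LIGHTS)

def p_ew(ew):
    """Marginal probability of an E/W color."""
    return sum(joint[(ns, ew)] for ns in LIGHTS)

def p_ew_given_ns(ew, ns):
    """P(E/W = ew | N/S = ns), from the definition of conditional probability."""
    return joint[(ns, ew)] / p_ns(ns)

def p_ns_given_ew(ns, ew):
    """P(N/S = ns | E/W = ew), from the definition of conditional probability."""
    return joint[(ns, ew)] / p_ew(ew)

# A: E/W light is green.  C: N/S light is red.
p_ac = joint[("red", "green")]
via_c = p_ns("red") * p_ew_given_ns("green", "red")    # P(C) * P(A | C)
via_a = p_ew("green") * p_ns_given_ew("red", "green")  # P(A) * P(C | A)
print(p_ac, via_c, via_a)
```

Both factorizations recover the joint entry 1/2. Note that P(N/S = red | E/W = green) is 1 here, since in this table the E/W light is green only when the N/S light is red.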

Independence

P(A,B) = P(A) * P(B)

P(A | B) = P(A)

P(B | A) = P(B)
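To see the definition in action, the following sketch tests P(A,B) = P(A) * P(B) for every pair of light colors in the traffic-light table. The helper names `marginals` and `is_independent` are illustrative assumptions. For contrast, it also builds a table as the product of the two marginals, which is independent by construction.

```python
from fractions import Fraction

LIGHTS = ("green", "yellow", "red")

# Joint table in tenths: each N/S color maps to its row over E/W green, yellow, red.
TENTHS = {"green": (0, 0, 2), "yellow": (0, 0, 1), "red": (5, 1, 1)}
joint = {
    (ns, ew): Fraction(n, 10)
    for ns, row in TENTHS.items()
    for ew, n in zip(LIGHTS, row)
}

def marginals(table):
    """Return the (N/S marginal, E/W marginal) pair for a joint table."""
    ns = {a: sum(table[(a, b)] for b in LIGHTS) for a in LIGHTS}
    ew = {b: sum(table[(a, b)] for a in LIGHTS) for b in LIGHTS}
    return ns, ew

def is_independent(table):
    """True if P(a, b) == P(a) * P(b) for every pair of colors."""
    ns, ew = marginals(table)
    return all(table[(a, b)] == ns[a] * ew[b] for a in LIGHTS for b in LIGHTS)

# Same marginals as the traffic table, but with the dependence removed.
ns, ew = marginals(joint)
product_table = {(a, b): ns[a] * ew[b] for a in LIGHTS for b in LIGHTS}

print(is_independent(joint))
print(is_independent(product_table))
```

The traffic lights fail the test: for example P(N/S=red, E/W=green) = 1/2, while P(N/S=red) * P(E/W=green) = 7/10 * 1/2 = 7/20.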