Let's see some common patterns for recursive running times. We'll analyze recursive code in two steps:

FindMax($a_1,\ldots,a_n$) # input is an array of n integersif (n=1) return $a_1$

elsem = $\lfloor \frac{n}{2}\rfloor$

p = FindMax($a_1,\ldots,a_m$)

q = FindMax($a_{m+1},\ldots,a_n$)

return max(p,q)

We have two recursive calls, each to a half-size problem. The extra work is constant. So our recursive running time definition looks like

The recursion tree has height $\log_2 n$. So the number of leaves and also the number of interior nodes is $O(2^{\log_2 n}) = O(n)$. There's constant work at each leaf (c) and also constant work at each interior node (d). So the whole algorithm is $O(n)$.

What happens if we store our values in a linked list, rather than an array?

FindMax($a_1,\ldots,a_n$) # input is a linked list of n integersif (n=1) return $a_1$

elsem = $\lfloor \frac{n}{2}\rfloor$

p = FindMax($a_1,\ldots,a_m$)

q = FindMax($a_{m+1},\ldots,a_n$)

return max(p,q)

We still have two recursive calls, each to a half-size problem. However, the extra work is now O(n) because we have to walk halfway down a linked list to split it in half. So our recursive running time definition looks like

This is the mergesort recurrence and we know from the textbook that its closed form is $O(n\log n)$. Headline bits of that recursion tree analysis:

Binary search is an efficient way to find something in an array that has already been arranged in some known pattern, usually sorted somehow.

Suppose we have an arrange that contains a sequence of non-zero values, followed by some number of zeroes. Here is a function FindEnd with helper function FindEndRec that finds the last non-zero value.

FindEnd(A: array of numbers)mylen = length(A)

if (mylen < 2) error

else if (A[0] = 0]) error

else if (A[mylen-1] $\not =$ 0) return mylen-1

else return FindEndRec(A,0,mylen-1)

# input should have length at least 2

# caller should verify that A[bottom] is non-zero and A[top] = 0

FindEndRec(A, bottom, top)if (top=bottom+1) return bottom

middle = $\lfloor{\frac{{\textrm bottom} + \textrm{top}}{2}}\rfloor$

if (A[middle] = 0)return FindEndRec(A,bottom, middle)elsereturn FindEndRec(A,middle,top)

This code splits the array in half and calls itself recursively on only one of the two halves. So, for the running time analysis, we have one recursive call with a half-size problem. And everything else in both functions takes constant time.

T(1) = c

T(n) = T(n/2) + d

The recursion tree has height \(O(\log n\)) and it doesn't branch. So the closed form is \(O(\log n\)).

Here'a a function that finds the smallest element in a list of numbers.

FindMin($a_1,\ldots,a_n$: linked list of numbers)if (n=1) return $a_1$

else return min($a_1$,FindMin($a_2,\ldots,a_n$)

Findmin is generally similar to findend. But notice that the input to the recursive call is only one element shorter than the original input. So the running time function is:

T(1) = c

T(n) = T(n-1) + d

The recursion tree still doesn't branch, but now its height is O(n). So the function's running time is O(n).

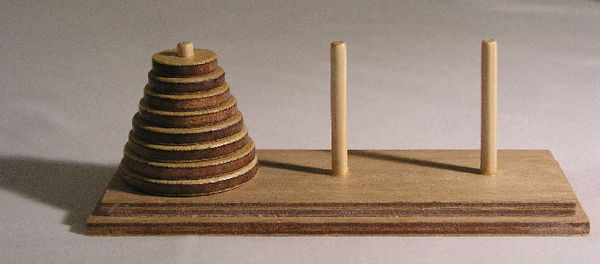

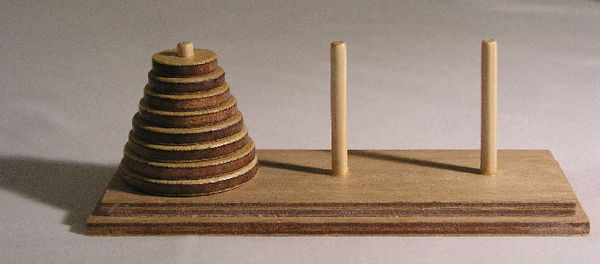

If the problem size decreases only slowly but the recursion tree branches, it's all over for efficiency. For example, consider the Towers of Hanoi puzzle:

The goal is to move the tower of rings onto another peg. You may only move one ring at a time. And a ring may not be put on top of a smaller ring. Watch this demo or this demo of the recursive solution.

Here's pseudo code for the solution:

# Hanoi moves all $n$ disks from peg $A$ to peg $B$

Hanoi($A$, $B$, $C$: pegs, $d_1,\ldots,d_n$:disks)if (n=1) move $d_1$ from $A$ to $B$

elseHanoi($A$, $C$, $B$, $d_1,\ldots,d_{n-1}$)

move $d_n$ from $A$ to $B$

Hanoi($A$, $C$, $B$, $d_1,\ldots,d_{n-1}$)

The first recursive call moves everything except the largest ring onto a temporary storage peg (C), using the magic of recursion. Then we move the largest ring to peg B. Finally the second recursive call moves the rest of the pile onto B.

We have two recursive calls. The problem size for each recursive call is one disk smaller than the original problem. So our recursive running time definition looks like this:

T(1) = c

T(n) = 2T(n-1) + d

If you solve this using either unrolling or a recursion tree, you'll discover that it is \(O(2^n)\). ☹

Headlines of recursion tree analysis: